Back in July 2012 I wrote a post with this same title. The concern then was device fragmentation. Teams were scrambling to cover iPhone, Android, BlackBerry, and desktop browsers with a single brand and a limited headcount. Design cost money because screens multiplied faster than budgets.

Fourteen years later, the cost has not decreased. It has shifted.

The screens consolidated. Responsive frameworks ate the device problem for breakfast. Component libraries made consistency cheap. And now generative AI can produce a full interface in the time it takes to type a sentence. Execution cost collapsed to near zero. Yet design still costs a fortune when it matters. The reason has nothing to do with pixels.

The First Impression Trap

AI-generated screens pass the lobby test. Stakeholders see a polished mockup and nod. Cards with rounded corners. Consistent spacing. A colour palette that feels intentional. Typography that breathes. Everything sits where you expect it to sit.

That is precisely the problem. It sits where you expect it because the model learned from 10 million screens that all made the same safe choices. The output is not a design decision. It is a statistical average wearing a serif font.

Design is answers to questions a user may ask. If you never understood the question, you cannot answer it.

First impressions dissolve the moment someone tries to use the thing. A dashboard looks beautiful until an operations lead needs to find a single anomaly across 47 services at 2am. A checkout flow looks clean until a user with 3 items in the cart, a coupon code, and a shipping constraint hits a wall the layout never anticipated. The screen answered questions nobody was asking and ignored the ones that mattered.

Copies Do Not Think

AI can replicate. It cannot reason about consequence.

A senior designer places a destructive action button on the far right, separated by whitespace, in a muted red. Not because a style guide said so. Because they watched a user accidentally delete a production dataset on a Tuesday afternoon in 2019 and spent the next 6 hours on a recovery call. That scar tissue informed the placement. The whitespace is not aesthetic. It is insurance.

AI reproduces patterns from training data. It cannot distinguish between a pattern that exists because it solves a real problem and a pattern that exists because everyone copied the same Dribbble shot. Both look identical in the dataset. Only one survives contact with actual users.

Generative models treat every layout as a composition problem. Arrange elements until the visual weight balances. Respect the grid. Match the palette. Ship it. What they miss is that design is not composition. Design is choreography. Every element exists in sequence, in priority, in relationship to what the user did 3 seconds ago and what they need to do next. That requires a model of the user, not a model of the layout.

Input Sets the Ceiling

Here is where the cost actually lives. Not in the rendering. In the understanding.

Hand a novice product manager a complex product and watch what happens. The requirements arrive as a list of features. “We need a dashboard. We need user management. We need analytics.” No mention of who uses it. No mention of what decision each screen enables. No mention of what happens when the data is empty, or stale, or contradictory. The designer gets a shopping list and is expected to build a restaurant.

It does not matter if the designer is world class. It does not matter if the developer can build anything. The ceiling is set by whoever defines the problem. Shallow input produces shallow output regardless of the talent downstream.

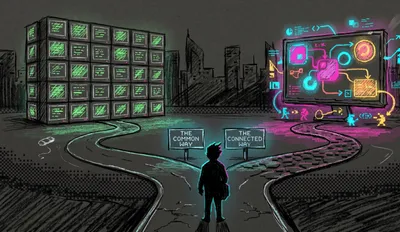

The bottleneck was never the hand that draws. It was always the mind that decides what to draw and why.

// The uncomfortable truth about AI and design

We already learned this with software engineering. Garbage specifications produce garbage code no matter how senior the engineer. A spec that says “build a login page” gets you a login page. A spec that says “users with expired sessions need to re-authenticate without losing their in-progress form data, and 34% of our support tickets come from this exact failure mode” gets you something worth building. The difference is not skill. The difference is input.

Design follows the same rule. AI compressed the execution gap to nothing. What remains is the comprehension gap, and that gap is widening because everyone now assumes the hard part is over.

Every Element Is an Answer

A well-designed screen is a conversation that already happened. The designer sat with the problem long enough to anticipate what the user would need, when they would need it, and what would confuse them along the way. Every label, every hierarchy, every piece of progressive disclosure exists because someone asked “what will the user be thinking at this exact moment?”

AI does not ask that question. It cannot. It has no model of the user’s state of mind. It has no theory of what information reduces anxiety versus what information creates it. It places a status indicator on a page because status indicators appear on similar pages in the training data. Whether that indicator answers the user’s actual concern, or buries it under 6 other metrics they do not care about, is a question the model never considered.

Pick any screen in your product. For every element visible, write down the user question it answers. If you cannot articulate the question, the element is decoration. If 3 elements answer the same question, 2 of them are noise. This exercise takes 15 minutes and reveals more than any design review.
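If you want to keep the results of that exercise somewhere more durable than a sticky note, it can be sketched as a tiny audit script. This is only an illustration: the function name `audit_screen`, the element names, and the questions are all made up, and it assumes two elements answer "the same question" only when the text matches exactly (ignoring case and surrounding spaces). In practice a person has to decide whether two questions are really the same one.

```python
from collections import defaultdict


def audit_screen(elements):
    """Split a screen's elements into decoration and noise.

    `elements` maps an element name to the user question it answers,
    or to None / an empty string if nobody could articulate one.
    """
    # No articulated question means the element is decoration.
    decoration = [name for name, q in elements.items() if not q or not q.strip()]

    # Group the remaining elements by the question they answer.
    # Assumption: exact text match (case-insensitive) means "same question".
    by_question = defaultdict(list)
    for name, q in elements.items():
        if q and q.strip():
            by_question[q.strip().lower()].append(name)

    # The first element listed for a question is kept; the rest are noise.
    noise = {q: names[1:] for q, names in by_question.items() if len(names) > 1}
    return decoration, noise


# Hypothetical dashboard, written down during the 15 minute exercise.
screen = {
    "status badge": "Is anything broken right now?",
    "uptime chart": "Is anything broken right now?",
    "alert banner": "Is anything broken right now?",
    "hero illustration": None,
    "export button": "How do I share this with my team?",
}

decoration, noise = audit_screen(screen)
print("Decoration:", decoration)
for question, extras in noise.items():
    print(f"Noise for '{question}':", extras)
```

Which of the duplicates to keep is still a design decision. The script keeps whichever element was listed first, which is an arbitrary choice made only to keep the sketch short.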

This is the difference between a designer who has spent years watching people struggle with interfaces and a model that has spent years ingesting the screenshots of those interfaces. One carries the failures. The other carries the pixels.

The Real Cost

The cost of design in 2026 is not Figma licenses or developer hours or AI subscriptions. It is the organizational willingness to understand the problem before generating the solution. That means research. That means sitting with users. That means product managers who can articulate not just what to build but why it matters to the person on the other side of the screen.

AI made the rendering free. It made the thinking more expensive by comparison, because now there is no execution bottleneck to hide behind. When a screen takes 4 weeks to build, nobody notices that the requirements were shallow. The timeline absorbs the confusion. When a screen takes 4 seconds to generate, the shallowness is immediately visible. There is nowhere left to hide.

Every company that treats AI as a way to skip the understanding phase will produce interfaces that look finished and feel hollow. Every company that uses AI after doing the hard work of comprehension will produce interfaces faster than ever before, interfaces that actually answer the questions their users came to ask.

Which side of that line are you building on?

